OpenAI is once again under scrutiny, with new claims suggesting it may have used copyrighted books without permission to train its AI models. A recent paper by the AI Disclosures Project alleges that OpenAI relied on paywalled books from O’Reilly Media to develop its advanced GPT-4o model.

AI models learn by processing large amounts of data, including books, articles, movies, and online content. They recognize patterns and generate responses based on the information they have absorbed. However, training AI on protected content without proper licensing raises serious legal and ethical concerns.

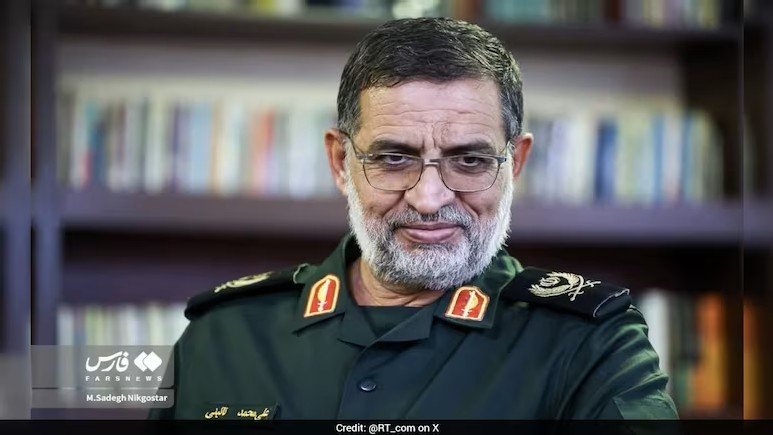

The paper, co-authored by Tim O’Reilly, Ilan Strauss, and Sruly Rosenblat, claims that GPT-4o shows a much higher level of familiarity with restricted O’Reilly book content than the older GPT-3.5 Turbo does. The researchers used a method called DE-COP, which is designed to detect whether a model has prior knowledge of copyrighted material. Their analysis suggests that GPT-4o was likely trained on non-public O’Reilly books published before its training cutoff date.
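To make the idea behind this kind of test concrete, here is a minimal Python sketch of a multiple-choice membership check in the spirit of DE-COP as it has been publicly described: the model is shown a verbatim passage alongside paraphrases and asked to pick the original, and a hit rate well above chance hints at prior exposure. This is an illustration under stated assumptions, not the researchers' code. The `choose` callable is a hypothetical stand-in for a real model query, and the sample passages are invented for the demo.

```python
from typing import Callable, List, Sequence, Tuple

# Each item pairs a verbatim passage with its paraphrases (hypothetical data).
Item = Tuple[str, Sequence[str]]


def guess_rate(items: Sequence[Item], choose: Callable[[List[str]], int]) -> float:
    """Fraction of quizzes in which `choose` picks the verbatim passage.

    The verbatim passage is placed in every position in turn, so a model
    that simply favors one answer slot scores exactly at chance.
    """
    correct = 0
    total = 0
    for verbatim, paraphrases in items:
        for pos in range(len(paraphrases) + 1):
            options = list(paraphrases)
            options.insert(pos, verbatim)
            if choose(options) == pos:
                correct += 1
            total += 1
    return correct / total if total else 0.0


# Toy stand-in for a model: it "recognizes" one passage and otherwise
# always answers with the first option.
seen = {"The quick brown fox jumps over the lazy dog."}


def toy_model(options: List[str]) -> int:
    for i, text in enumerate(options):
        if text in seen:
            return i
    return 0


items = [
    ("The quick brown fox jumps over the lazy dog.",
     ["A fast brown fox leaps over a lazy dog.",
      "Over the lazy dog jumps a quick fox.",
      "The speedy fox hops across the sleepy dog."]),
    ("Rain fell softly on the tin roof all night.",
     ["All night, rain pattered gently on the tin roof.",
      "Soft rain kept falling on the metal roof overnight.",
      "The tin roof was tapped by light rain until morning."]),
]

print(guess_rate(items, toy_model))  # 0.625, against a chance level of 0.25
```

A single score like this proves little on its own. The useful comparison is between material the model could have seen and comparable material it could not have seen, such as text published after its training cutoff.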

The study does not provide definitive proof, but it raises important questions. The authors acknowledge that OpenAI may have obtained the text from content users submitted to its products rather than by scraping it directly. The study also did not cover newer models such as GPT-4.5 and o3-mini, so it says little about OpenAI’s most recent training practices.

OpenAI has previously advocated for more flexible copyright rules in AI development. The company also pays for some of its training data, securing licensing agreements with publishers and media platforms. However, it faces multiple lawsuits over its data collection practices, and this latest report could add more pressure.

As AI companies continue to seek high-quality training data, the balance between innovation and copyright law remains hotly debated. With growing concerns about data transparency and AI ethics, the industry is likely to face increasing legal and regulatory scrutiny.